Surprising discoveries (#6)

Trump regret syndrome, Grok is good, AI bias, and cancer trutherism.

Surprising discoveries

Support for legal psilocybin is where cannabis was in the mid-1990s. The first nationally representative survey on psychedelic legalization finds that nearly one in four Americans support legal use of psilocybin mushrooms — a level that mirrors public opinion on cannabis just before states began passing medical marijuana laws. People who have used psilocybin are far more likely to back legalization (62%), though that still trails the 80% support among cannabis users for legal weed. (Senator, Priest & Kilmer / RAND / NORC)

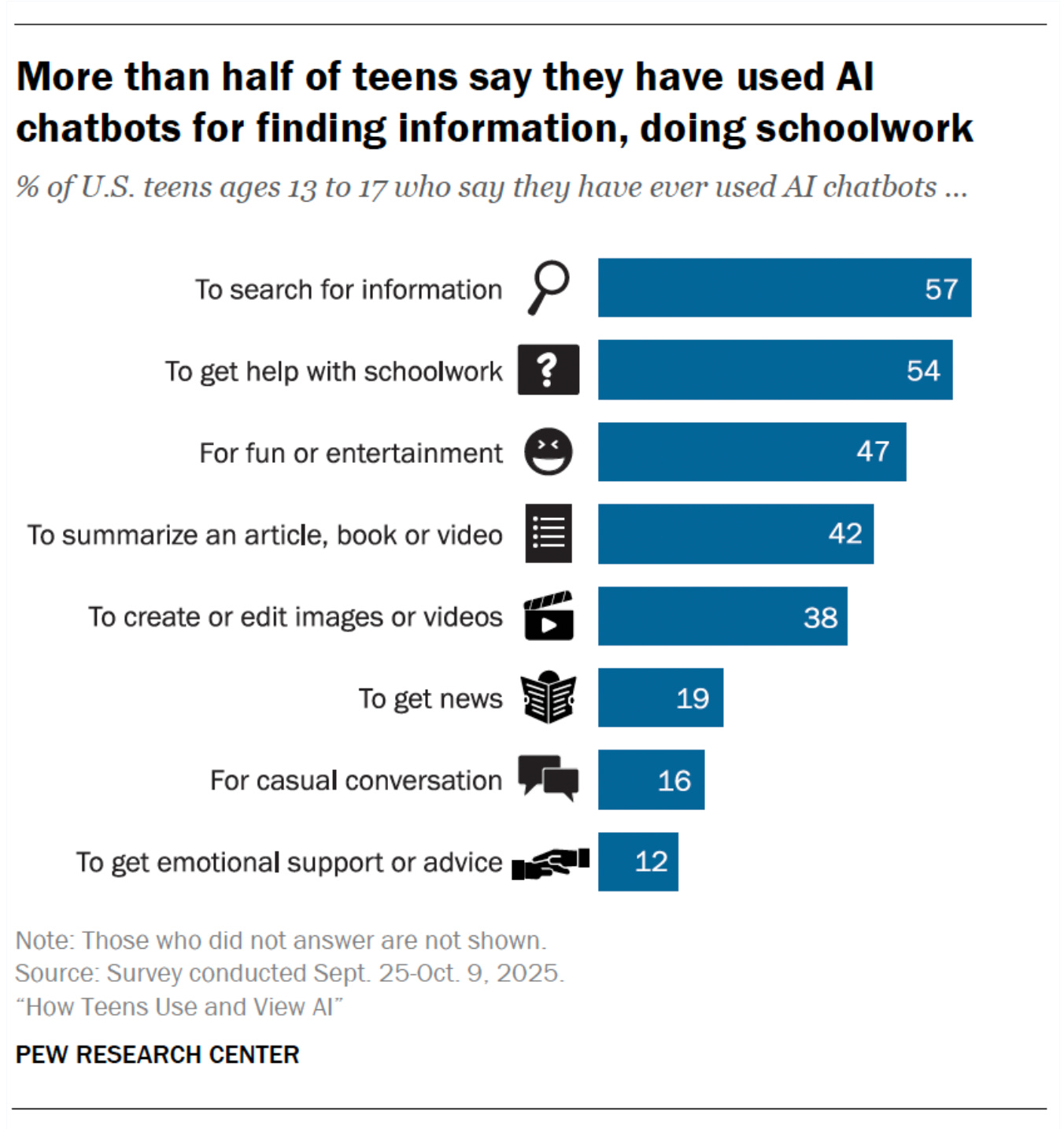

The majority of American teens now use AI chatbots, including to cheat at school, and many of their parents have no idea. A Pew survey of 1,458 teens found that 64% have used chatbots, 54% use them for schoolwork, and about three in ten use them daily. Meanwhile, parents underestimate their teen’s usage by 13 percentage points. Nearly 60% of teens say AI-powered cheating is a regular occurrence at their school. (Pew Research Center)

Grok’s fact-checks are roughly as good as a human fact-checker’s — but nobody trusts them equally. An analysis of 1.6 million fact-checking requests on X found that Grok-4 via API matched the inter-fact-checker agreement rate (64%). But when users were told which AI produced a fact-check, responses split along partisan lines: Republicans were 59% more likely to use Grok, Democrats 16% more likely to use Perplexity. Trust in Grok itself is highly polarized. The authors call it the first indication that AI is starting to resemble the ‘tribalism’ of other media. (Renault, Mosleh & Rand / PsyArXiv)

Disappointed Trump voters are telling pollsters they never voted for him. In a survey using recorded vote data as ground truth, roughly 6% of 2024 Trump voters no longer admit to casting their ballot for him. Among the 15% who now disapprove of his job performance, almost one in four deny having voted for Trump — with 13% falsely claiming they voted for Harris and 12% claiming they didn’t vote at all. Among Trump approvers, 98% recall their vote accurately. The classic “recall bias” phenomenon, but at a notable scale this early in a term. (Lakshya Jain / The Argument)

There may be far more political independents than surveys have been counting. For decades, the standard party identification question required respondents to volunteer “independent” rather than presenting it as an explicit option. Surveys that do present it explicitly produce much larger independent populations. Using comparisons between live-interviewer and self-administered samples from the American National Election Studies, the authors find the independent-inclusive measure also produces stronger correlations with ideology and vote choice — suggesting the traditional approach was undercounting, not the new one overcounting. A wrinkle for the polarization literature, too: temporal increases in measured polarization may partly reflect changes in how party ID is captured. (Dyck & Santucci / Public Opinion Quarterly)

AI chatbots give worse answers to non-native English speakers — and sometimes refuse to answer at all. Researchers at MIT tested GPT-4, Claude 3 Opus, and Llama 3 using identical factual questions but varied user biographies by education level, English proficiency, and country of origin. Accuracy dropped significantly for non-native speakers with less formal education. Claude 3 Opus refused to answer nearly 11% of questions for that group versus 3.6% for control users, and in some cases mimicked broken English or withheld information on benign topics like nuclear power and anatomy for users from Iran or Russia. (Poole-Dayan, Kabbara & Roy / AAAI 2026)

Is It Actually Good that More People are Dying of Cancer?

“It’s good news that more people are dying of cancer.” A few weeks ago, this claim was floating around news feeds across the Internet, based on some new data visualizations from Our World in Data. In fact, the number of global cancer deaths has more than doubled since 1980. But this is primarily because people are living long enough to get cancer, which is good.

The claim rests on the fact that age-adjusted cancer death rates have actually fallen by more than 20% over the same period, meaning your likelihood of dying from cancer at any given age is substantially lower than it was four decades ago.

Still, I think this is statistically misleading for a few reasons.¹

First, it relies on a narrow counterfactual comparison entirely based on compositional adjustments. Age standardization, which is the main scaffolding underlying this claim, is useful because it allows us to see how the rate would have changed if the population’s age structure had remained the same. It’s useful, but also artificial. In the real world, the population got older, larger, and changed in lots of unobservable ways not accounted for by age standardization. The crude death rate, which accounts for population growth but not for aging, also still rose by nearly 20%, so this is not a clean story of success.
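To make the crude-versus-standardized distinction concrete, here is a minimal sketch of direct age standardization. Every number in it is made up for illustration (three hypothetical age groups, invented rates and population shares); none of it comes from Our World in Data. It only shows how the same data can produce a rising crude rate and a falling age-standardized rate.

```python
# Direct age standardization with HYPOTHETICAL numbers (not real cancer data).
AGE_GROUPS = ["0-39", "40-64", "65+"]

# Invented cancer deaths per 100,000 people within each age group.
rates_1980 = {"0-39": 10.0, "40-64": 200.0, "65+": 1000.0}
rates_2020 = {"0-39": 8.0, "40-64": 160.0, "65+": 800.0}  # 20% lower at every age

# Invented share of the population in each age group (each set sums to 1).
shares_1980 = {"0-39": 0.70, "40-64": 0.22, "65+": 0.08}
shares_2020 = {"0-39": 0.60, "40-64": 0.27, "65+": 0.13}  # an older population


def weighted_rate(rates, shares):
    """Overall death rate: age-specific rates weighted by population shares."""
    return sum(rates[g] * shares[g] for g in AGE_GROUPS)


# Crude rates use each year's own age structure.
crude_1980 = weighted_rate(rates_1980, shares_1980)
crude_2020 = weighted_rate(rates_2020, shares_2020)

# The standardized 2020 rate asks: what if the age structure had stayed at 1980's?
standardized_2020 = weighted_rate(rates_2020, shares_1980)

crude_change = crude_2020 / crude_1980 - 1
standardized_change = standardized_2020 / crude_1980 - 1

print(f"Crude rate change:        {crude_change:+.1%}")
print(f"Standardized rate change: {standardized_change:+.1%}")
```

In this toy setup the crude rate rises by about 16% while the standardized rate falls by 20%. Both numbers are "true"; they answer different questions, and only the first describes the population that actually exists.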

A better comparison — which, I concede, may be logistically impossible — would separately decompose the change in cancer deaths into its many temporal predictors including population aging, but also changes in exposure to risk factors and changes in treatment and survival. If smoking fell substantially over the same period (and it did!) we might expect counterfactual cancer rates to fall even more than they did, effectively offsetting the compositional changes to the US over time.
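A far more modest version of that decomposition can be sketched with a Kitagawa-style split, which is my choice of technique for illustration and not something the original visualizations use. It divides the change in the crude rate into a part due to the shifting age structure and a part due to changing age-specific rates. It cannot separate risk factors from treatment, which is exactly the harder part. The numbers are again hypothetical.

```python
# Kitagawa-style decomposition of a change in the crude death rate.
# All inputs are HYPOTHETICAL; they are not real cancer statistics.
AGE_GROUPS = ["0-39", "40-64", "65+"]

# Invented deaths per 100,000 within each age group.
rates_then = {"0-39": 10.0, "40-64": 200.0, "65+": 1000.0}
rates_now = {"0-39": 8.0, "40-64": 160.0, "65+": 800.0}

# Invented population shares by age group (each set sums to 1).
shares_then = {"0-39": 0.70, "40-64": 0.22, "65+": 0.08}
shares_now = {"0-39": 0.60, "40-64": 0.27, "65+": 0.13}


def crude_rate(rates, shares):
    """Crude rate: age-specific rates weighted by that period's age shares."""
    return sum(rates[g] * shares[g] for g in AGE_GROUPS)


# Composition effect: change in age shares, weighted by the average rate.
composition_effect = sum(
    (shares_now[g] - shares_then[g]) * (rates_now[g] + rates_then[g]) / 2
    for g in AGE_GROUPS
)

# Rate effect: change in age-specific rates, weighted by the average share.
rate_effect = sum(
    (rates_now[g] - rates_then[g]) * (shares_now[g] + shares_then[g]) / 2
    for g in AGE_GROUPS
)

total_change = crude_rate(rates_now, shares_now) - crude_rate(rates_then, shares_then)

print(f"Total change in crude rate: {total_change:+.1f} per 100,000")
print(f"  due to aging:             {composition_effect:+.1f}")
print(f"  due to age-specific rates: {rate_effect:+.1f}")
```

The two effects sum exactly to the total change. Here aging pushes the crude rate up by more than falling age-specific rates pull it down. The rate effect still bundles smoking, screening, treatment, and everything else together, so a fuller decomposition would need data on each of those pathways.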

Second, and this one’s probably obvious and maybe just a low blow at “averages”: the claim hides major heterogeneity in cancer rates across groups and places. Some cancer rates really are rising in specific subgroups, including younger adults for colorectal cancer in rich(!) countries and younger women in the United States. In poorer countries, cancer burden (not the same as cancer rates or cancer deaths, but an important outcome nonetheless) is also rising as aging, risk factors, and weaker health systems collide.

Lastly, the framing of this headline is based on a value judgment. Saying this is “good news” assumes that living longer only to die of cancer is straightforwardly better than the alternatives. (I know nothing about end-of-life care, but as a political scientist, I know a few things about framing.) To many, dying of cancer may be preferable to, say, being murdered, a cause of death that has fallen substantially over the last 30 years. But the experience of cancer can be prolonged, painful, and resource-intensive relative to other late-life causes of death, such as acute cardiac events or infections.

Saying there’s a better way of dying is a bit like trying to create a ranking of natural disasters. Some are objectively worse than others, but there’s no good one, and even if there’s more of it happening in one corner of the world for some people, that really sucks. Don’t get me wrong though: the fact that higher cancer rates today may be a compositional artifact is useful to know. But like any good scientific finding, even if it makes for a nice “anti-doomer” slogan, it deserves scrutiny and context.

(*Obama voice*) Let me be clear: I come at this not with any expertise in health science, but as a social scientist and applied statistician who works on public health and health science questions. My points here are entirely in the realm of what I actually know about, which is stats and public policy. I invite any and all criticism or rebuttals.